Responsible Data Practices: LLM-Friendly SEO Series, Part 6

Everyone is rushing to ensure their Magento store is visible to the new wave of Large Language Models (LLMs). The panic is understandable.

Author

Bryan Mull

Category

SEO/GEO

Introduction

If ChatGPT or Perplexity can’t read your product data, you lose out on the next generation of search traffic. But in the haste to open the doors to AI crawlers, too many businesses are ripping the hinges off entirely.

Optimizing for LLMs is not just about visibility; it is about control. When you grant access to your site’s data, you are handing over information that will be processed, stored, and potentially regurgitated to millions of users. If you aren’t careful, you might accidentally train an AI on your wholesale pricing tiers, your staging environment, or worst of all, your customer data.

At Digital Mully, we treat data responsibility as a structural necessity, not a philosophical “nice-to-have.” We have seen the logs where aggressive scrapers brought high-volume Magento sites to their knees, and we have fixed the robots.txt files that were inadvertently exposing internal dashboards. Here is how to build an infrastructure that welcomes the right traffic while locking down the rest.

Clearly Mark Public vs. Private Information

Your first line of defense is technical, not legal. You must implement a systematic classification of your site architecture that tells crawlers explicitly where they are allowed to go. This is particularly critical for Magento and Adobe Commerce installations, which generate thousands of URL variations, customer account pages, and checkout flows that have zero business being in a public dataset.

We frequently see development teams leave staging environments open to indexing. This confuses LLMs (and Google) with duplicate content, and it exposes future product launches and in-progress development work to the public web. Use robots.txt directives strictly: disallow the /checkout/, /customer/, and /catalogsearch/ paths in the robots.txt file at the site root. But don’t rely on a crawler’s “goodwill.” robots.txt is advisory, so anything that must stay private, staging environments above all, also needs access control at the server level, such as HTTP authentication or an IP allow-list.
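Before deploying rules like these, it helps to confirm they block what you think they block. The sketch below uses Python’s standard-library robots.txt parser to test the three paths named above. The rule set, the shop.example.com host, and the sample URLs are illustrative assumptions, not a complete Magento robots.txt, and the check only reflects how a compliant crawler would behave.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules only: the three paths come from the text above.
# A real store's robots.txt will need more entries than this.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /customer/
Disallow: /catalogsearch/
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if a compliant crawler may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    base = "https://shop.example.com"  # hypothetical host
    for path in (
        "/checkout/cart/",
        "/customer/account/login/",
        "/catalogsearch/result/?q=shoes",
        "/blue-widget.html",
    ):
        print(path, is_allowed("GPTBot", base + path))
```

A check like this can run in a deployment pipeline so that a robots.txt change that reopens a private path fails the build instead of reaching production.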

Beyond the robots.txt file, use the X-Robots-Tag HTTP header for non-HTML files like PDFs or technical documentation that you don’t want ingested. For content you do want found—like product descriptions and blog posts—use structured data markup (JSON-LD) to spoon-feed context to the bots. This binary approach—hard blocks for private data, structured invitations for public data—creates a boundary that machines can respect.
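As a rough sketch of both halves of that approach: the first function decides whether a response should carry an X-Robots-Tag header based on the file extension, and the second builds a schema.org Product snippet in JSON-LD. The extension list, function names, and product fields are assumptions chosen for illustration. In a real store the header would normally be set in the web server or CDN configuration, and the markup would come from your theme or an extension.

```python
import json
from pathlib import PurePosixPath
from typing import Dict

# Assumption: these extensions mark documents that should stay out of indexes.
NOINDEX_EXTENSIONS = {".pdf", ".doc", ".docx", ".xls", ".xlsx"}


def robots_headers(request_path: str) -> Dict[str, str]:
    """Return extra response headers for a URL path (no query string).

    An empty dict means the file carries no indexing restriction.
    """
    suffix = PurePosixPath(request_path).suffix.lower()
    if suffix in NOINDEX_EXTENSIONS:
        return {"X-Robots-Tag": "noindex, nofollow"}
    return {}


def product_json_ld(name: str, sku: str, description: str,
                    price: float, currency: str, url: str) -> str:
    """Build a <script> tag holding schema.org Product markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "url": url,
        },
    }
    # Escape "</" so product text cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"
```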

Compliance in the Age of Scrapers

Data protection regulations like GDPR and CCPA weren’t written with LLMs in mind, but they absolutely apply to how you manage data accessibility. If an AI scrapes your site and ingests Personally Identifiable Information (PII) from a user review section or a publicly visible customer forum, you have lost control of that data. You cannot easily ask an LLM to “forget” what it has learned.

We advise our clients to aggressively sanitize any user-generated content before it renders on the frontend. If you display reviews, ensure names are anonymized or abbreviated. Review your terms of service to establish clear consent mechanisms for how public data might be processed.
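A minimal sketch of that sanitizing step, assuming reviews arrive as a display name plus free text. The regular expressions are deliberately simple heuristics and the function names are hypothetical. They will miss some PII and will occasionally redact harmless digit runs such as long order numbers, so treat this as a starting point and not a compliance control.

```python
import re

# Heuristic patterns only; real-world PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def abbreviate_name(full_name: str) -> str:
    """Turn 'Jane Doe' into 'Jane D.' so the surname never renders."""
    parts = full_name.split()
    if not parts:
        return "Anonymous"
    if len(parts) == 1:
        return parts[0]
    return f"{parts[0]} {parts[-1][0].upper()}."


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers in review text."""
    text = EMAIL_RE.sub("[email removed]", text)
    return PHONE_RE.sub("[phone removed]", text)


if __name__ == "__main__":
    print(abbreviate_name("Jane Doe"))
    print(redact_pii("Mail me at jane.doe@example.com or call +1 (555) 010-9999."))
```

Running the redaction when the review is saved, not only when it renders, keeps the raw values out of caches and feeds as well.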

This is also why we emphasize strict data retention policies in our technical audits. If you are holding onto historical customer data you don’t need on a public-facing server, you are increasing your surface area for risk. Automated purging of sensitive logs and rigorous database management ensure that even if a crawler ignores your directives, there is nothing compromising for it to find.
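Automated purging can be as simple as a scheduled job that deletes files past a retention window. The sketch below is one way to do it, assuming logs or exports sit as plain files in a single directory and that file modification time is a fair proxy for age. The function names and the dry-run default are illustrative choices, not a Magento feature.

```python
from datetime import datetime, timedelta, timezone
from pathlib import Path
from typing import List, Optional


def expired_files(directory: Path, max_age_days: int,
                  now: Optional[datetime] = None) -> List[Path]:
    """List files in the directory whose last modification is past the window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    expired = []
    for path in directory.iterdir():
        if not path.is_file():
            continue  # this sketch does not recurse into subdirectories
        modified = datetime.fromtimestamp(path.stat().st_mtime, tz=timezone.utc)
        if modified < cutoff:
            expired.append(path)
    return sorted(expired)


def purge(directory: Path, max_age_days: int, dry_run: bool = True) -> List[Path]:
    """Delete expired files; with dry_run=True, only report what would go."""
    targets = expired_files(directory, max_age_days)
    if not dry_run:
        for path in targets:
            path.unlink()
    return targets
```

Defaulting to a dry run means the first scheduled execution reports what it would delete, which gives you a chance to review the list before anything is removed.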

The Infrastructure Threat: Rate Limiting

This is the most immediate technical pain point we are seeing right now. AI crawlers are voracious. Unlike a human user who browses page by page, or Googlebot, which generally adapts its crawl rate to what your server can handle, many third-party scrapers will hit your site with thousands of requests per minute.

For a Magento site, which is resource-intensive by nature, this is a performance killer. We have seen unverified bots hammer dynamic search pages, bypassing Varnish caching and hitting the database directly. The result is a slow site for real, paying customers, or a complete server crash during peak traffic.

You must deploy intelligent throttling. Simple IP blocking isn’t enough anymore because scrapers use rotating proxies. You need a Web Application Firewall (WAF) configured to distinguish between legitimate crawlers (like Googlebot or GPTBot) and aggressive scrapers. When a client exceeds its limit, return an HTTP 429 (Too Many Requests) response with a Retry-After header so well-behaved clients know when to come back.
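The throttling logic behind a 429 response is often a token bucket: each client earns request tokens at a steady rate, can save up a small burst, and is told to wait once the bucket is empty. The sketch below shows the idea in plain Python. The class names, the rate and burst values, and the choice of client key are assumptions. Because scrapers rotate IPs, a key such as a bot fingerprint from your WAF is likely to work better than a bare IP address, and in production this logic would live at the edge, not in application code.

```python
import math
import time
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class TokenBucket:
    tokens: float   # requests the client may still make right now
    updated: float  # timestamp of the last refill, in seconds


class RateLimiter:
    """Per-client token bucket; state is kept in memory for illustration only."""

    def __init__(self, rate: float, burst: int) -> None:
        self.rate = rate    # tokens added per second
        self.burst = burst  # maximum tokens a client can accumulate
        self.buckets: Dict[str, TokenBucket] = {}

    def check(self, client_key: str,
              now: Optional[float] = None) -> Tuple[int, Dict[str, str]]:
        """Return (status_code, headers) for one incoming request."""
        now = time.monotonic() if now is None else now
        bucket = self.buckets.get(client_key)
        if bucket is None:
            bucket = self.buckets[client_key] = TokenBucket(float(self.burst), now)

        # Refill in proportion to elapsed time, capped at the burst size.
        elapsed = max(0.0, now - bucket.updated)
        bucket.tokens = min(float(self.burst), bucket.tokens + elapsed * self.rate)
        bucket.updated = now

        if bucket.tokens >= 1.0:
            bucket.tokens -= 1.0
            return 200, {}

        # Tell the client how many whole seconds until a token is available.
        wait = (1.0 - bucket.tokens) / self.rate
        return 429, {"Retry-After": str(math.ceil(wait))}
```

With `RateLimiter(rate=1.0, burst=2)`, a client gets two immediate requests, then a 429 with `Retry-After: 1` until a second has passed.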

If you don’t control the rate at which AI consumes your site, you are paying for the server costs to train someone else’s model. We implement strict rate limiting rules at the edge (using tools like Fastly or Cloudflare) to ensure that your infrastructure prioritizes revenue-generating traffic first.

Curating Data for AI Consumption

Once you have secured the private data and protected your infrastructure, you can shift to offense. The most advanced approach to LLM optimization is creating curated datasets specifically for training purposes.

Instead of forcing an LLM to scrape your entire HTML structure to find product specs, you can provide machine-readable metadata that defines exactly what you want known. This includes clear licensing frameworks. If you have proprietary data—market research, unique product attributes, or pricing algorithms—you can establish terms regarding its use.

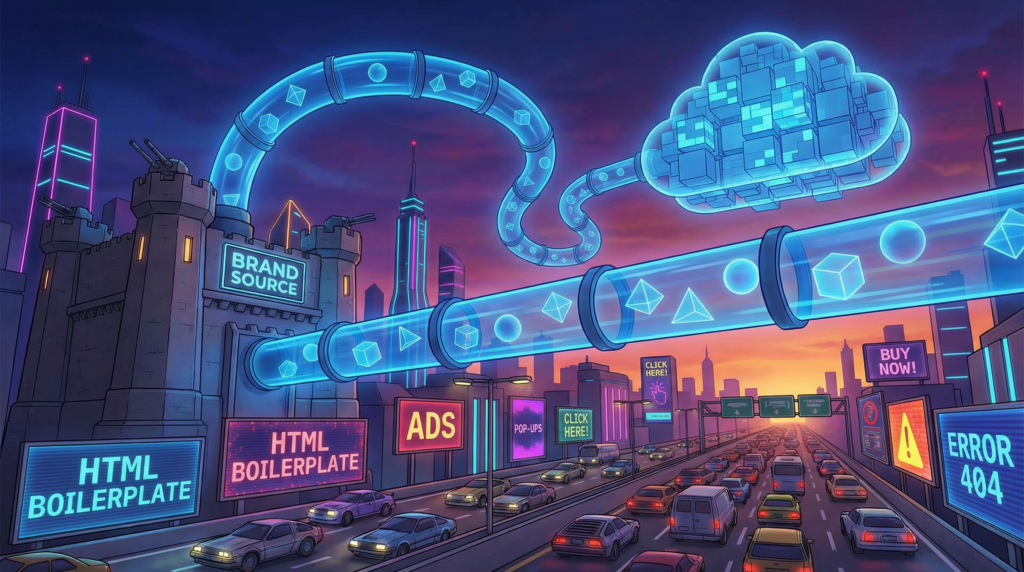

For our larger partnership clients, we look at creating specific endpoints or feeds designed for ingestion. This ensures the AI gets the highest fidelity version of your brand story without the noise of HTML boilerplate. It allows you to verify the provenance of the data and ensures that when an AI answers a question about your brand, it’s referencing a source you control.
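One possible shape for such a feed is JSON Lines: one product record per line, built from an explicit allow-list of fields, with a license URL, a generation timestamp, and a content hash attached for provenance. Everything specific here is an assumption for illustration, including the field names, the license URL, and the hashing scheme. No AI vendor is known to require this particular format.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, Iterable, Iterator, Optional

# Assumption: only these fields are safe to publish. Anything not listed
# (cost, wholesale tiers, internal notes) never reaches the feed.
PUBLIC_FIELDS = ("sku", "name", "description", "url")


def build_feed(products: Iterable[Dict[str, object]], license_url: str,
               generated_at: Optional[datetime] = None) -> Iterator[str]:
    """Yield one JSON line per product, limited to allow-listed fields."""
    stamp = (generated_at or datetime.now(timezone.utc)).isoformat()
    for product in products:
        record = {key: product[key] for key in PUBLIC_FIELDS if key in product}
        record["license"] = license_url
        record["generated_at"] = stamp
        # Hash the record before adding the hash itself, so a consumer can
        # drop the "sha256" key, re-serialize the same way, and compare.
        payload = json.dumps(record, sort_keys=True, ensure_ascii=False)
        record["sha256"] = hashlib.sha256(payload.encode("utf-8")).hexdigest()
        yield json.dumps(record, sort_keys=True, ensure_ascii=False)


if __name__ == "__main__":
    catalog = [{
        "sku": "BW-1",
        "name": "Blue Widget",
        "description": "A sturdy blue widget.",
        "url": "https://shop.example.com/blue-widget.html",
        "wholesale_price": 4.10,  # dropped: not in PUBLIC_FIELDS
    }]
    for line in build_feed(catalog, "https://shop.example.com/data-license"):
        print(line)
```

The allow-list is the important design choice: a new internal attribute added to the catalog stays private by default until someone deliberately adds it to the feed.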

The Bottom Line

Responsible data practices build trust. When you clearly delineate what is public and what is private, you protect your customers and keep your server bills in check.

You don’t need to choose between blocking AI entirely and letting it run wild on your server. You need a technical framework that enforces boundaries. If you suspect your current setup is leaking data or if your server loads are spiking due to unexplained bot traffic, it is time to audit your access controls.
